What AI Can (and Can't) Do in a COBOL Modernization Project

AI can dramatically accelerate COBOL modernization by mapping systems, surfacing business rules, translating code, and generating tests, but it cannot replace human ownership of strategy, risk, regulatory intent, architecture, and final validation.

The promise is compelling: feed decades of COBOL into an AI, get clean Java out the other side. The reality is more nuanced. AI genuinely excels at parts of COBOL modernization that used to take armies of consultants years to complete, but other parts still require human judgment no model can replicate. Understanding that division clearly is what separates projects that succeed from the ones that quietly fail in production.

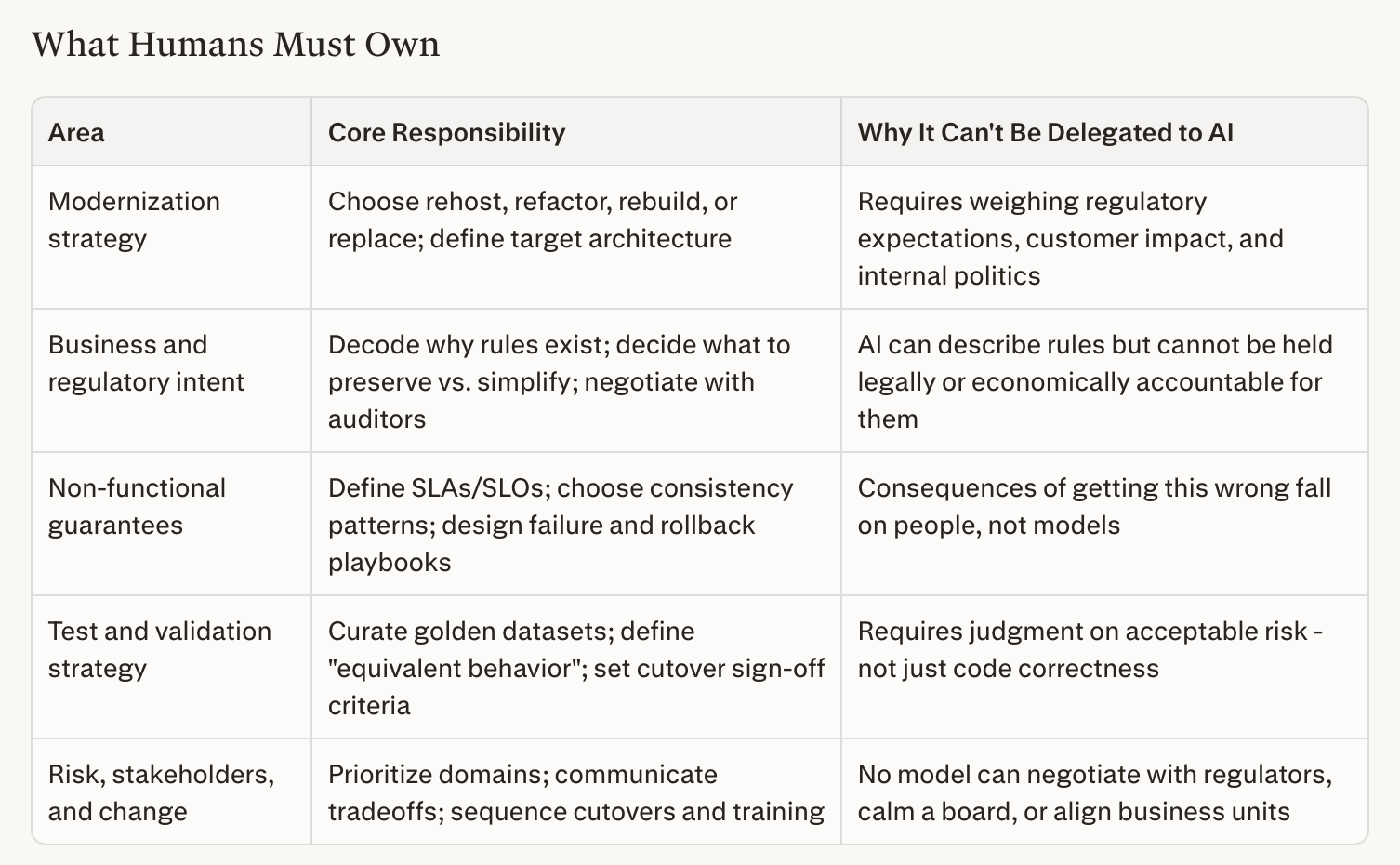

What Humans Must Own

1. Decide what you're really modernizing

Before any AI touches code, humans have to answer the most fundamental questions:

- What problem are we solving: cost, vendor lock-in, agility, risk, skills scarcity, or all of the above?

- Which systems are truly strategic, and which can be wrapped, retired, or replaced with SaaS?

- How much risk can we tolerate? Payments and reporting are very different conversations.

Rehost, refactor, rebuild, or replace: only humans can weigh regulatory expectations, customer impact, and internal politics to make that call.

2. Interpret business and regulatory intent

COBOL systems encode decades of domain rules - tax corner cases, settlement practices, accounting conventions, and regulatory interpretations that evolved with the business. Domain SMEs, compliance teams, and product owners must:

- Decode why a rule exists, not just what the code does

- Decide which quirks are legally required, which are historical accidents, and which can be simplified

- Negotiate with regulators and auditors about what "equivalent behavior" actually means

AI can surface and describe rules at scale. It cannot be held accountable for their correctness in a legal or economic sense.

3. Own the non-functional guarantees

Mainframes earn their reputation on availability, throughput, predictable batch windows, and strong transactional semantics. Moving off COBOL means someone has to ensure those guarantees don't quietly disappear. Humans need to:

- Define SLAs and SLOs tied to real money and reputation

- Choose patterns to preserve consistency and recovery (saga vs. 2PC, eventual vs. strong consistency)

- Decide what happens under stress: partial failures, rollback rules, and operational playbooks

The consequences of getting this wrong fall on people, not models.
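To make the saga option concrete, here is a minimal sketch of the pattern: each step pairs an action with a compensating action, and a failure triggers the compensations for completed steps in reverse order. The names `run_saga` and `demo`, and the two-account transfer, are hypothetical illustrations, not part of any particular framework.

```python
from typing import Callable, Dict, List, Tuple

# (name, action, compensation) - all three are supplied by the caller
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_saga(steps: List[Step]) -> List[str]:
    """Run steps in order; on failure, undo completed steps in reverse."""
    log: List[str] = []
    completed: List[Step] = []
    for name, action, compensate in steps:
        try:
            action()
            log.append(f"done:{name}")
            completed.append((name, action, compensate))
        except Exception:
            log.append(f"failed:{name}")
            # Compensate only the steps that actually completed.
            for done_name, _, undo in reversed(completed):
                undo()
                log.append(f"compensated:{done_name}")
            break
    return log


def demo() -> Tuple[Dict[str, int], List[str]]:
    """Hypothetical transfer where the credit step fails."""
    balances = {"A": 100, "B": 0}

    def debit() -> None:
        balances["A"] -= 40

    def undo_debit() -> None:
        balances["A"] += 40

    def credit() -> None:
        raise RuntimeError("target account unavailable")

    def undo_credit() -> None:
        balances["B"] -= 40

    log = run_saga([("debit", debit, undo_debit),
                    ("credit", credit, undo_credit)])
    return balances, log
```

Unlike 2PC, nothing here is locked across steps, so other readers can observe the intermediate state between the debit and its compensation. Whether that window is acceptable for a given domain is exactly the kind of decision humans have to own.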

4. Design the test and validation strategy

AI translation failures often look fine in unit tests and only surface under real workloads: packed decimals mishandled as integers, REDEFINES clauses silently broken, date edge cases that trigger once a year. Humans should:

- Curate golden datasets covering real business scenarios, including ugly edge cases and historical incidents

- Define what "equivalent" concretely means: bit-for-bit identical outputs, or functionally acceptable differences?

- Set up parallel runs, reconciliation checks, and sign-off criteria for each cutover phase

AI can generate tests quickly - but humans decide what to test, how strict to be, and when it is safe to go live.
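The packed-decimal failure mode is easy to show. The sketch below decodes an IBM packed-decimal (COMP-3) field, where each byte holds two decimal digits and the final nibble carries the sign. The function name `unpack_comp3` and the `scale` parameter (the number of implied decimal places, taken from the field's PICTURE clause) are assumptions made for illustration.

```python
from decimal import Decimal


def unpack_comp3(raw: bytes, scale: int) -> Decimal:
    """Decode a packed-decimal (COMP-3) field with `scale` implied decimals."""
    if not raw:
        raise ValueError("empty field")
    digits = []
    for byte in raw[:-1]:
        digits.extend((byte >> 4, byte & 0x0F))  # two digits per byte
    digits.append(raw[-1] >> 4)                  # last byte: one digit...
    sign_nibble = raw[-1] & 0x0F                 # ...plus the sign nibble
    if any(d > 9 for d in digits) or sign_nibble < 0x0A:
        raise ValueError("not a valid packed-decimal field")
    unscaled = int("".join(map(str, digits)))
    if sign_nibble in (0x0B, 0x0D):              # B and D mark negatives
        unscaled = -unscaled
    return Decimal(unscaled).scaleb(-scale)


# A field declared as PIC S9(5)V99 COMP-3 holding 12345.67
field = bytes.fromhex("1234567C")
amount = unpack_comp3(field, scale=2)

# The silent bug: treating the same bytes as a binary integer
wrong = int.from_bytes(field, "big")
```

A translation that reads the field as a binary integer still produces a number, which is why this class of bug tends to survive unit tests that only check for crashes or types. Golden datasets with known monetary values catch it immediately.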

5. Manage risk, stakeholders, and change

No model can negotiate with your regulator, calm your board, or align feuding business units. Humans must:

- Prioritize which domains to modernize first based on risk and value, not just technical difficulty

- Communicate tradeoffs to non-technical stakeholders in language they trust

- Sequence cutovers, training, and decommissioning so operations and customer experience remain stable

AI can draft the deck. It cannot own accountability for what is in it.

Humans, not AI, must own key decisions and accountability.

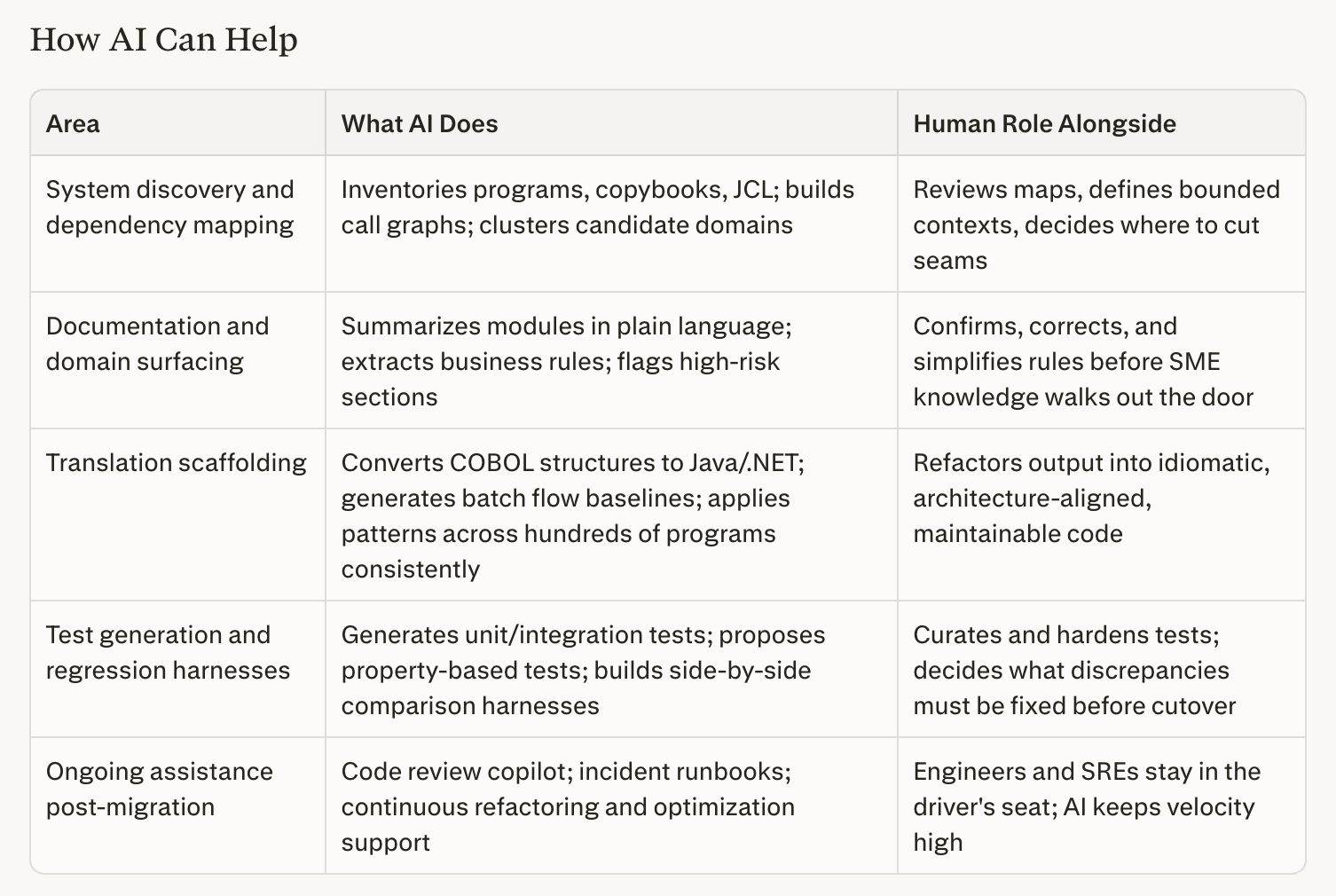

How AI Can Help

AI is not just useful here - in several areas it is demonstrably faster and more thorough than experienced human teams. Used well, it compresses years of groundwork into weeks.

1. System discovery and dependency mapping

A COBOL estate of thousands of programs, copybooks, JCL scripts, and data files that would take consultants months to inventory can be mapped by AI in days:

- Build a complete inventory of programs, copybooks, JCL, and data files

- Generate call graphs and dependency maps across millions of lines of code

- Cluster related components into candidate domains, giving architects a richer starting point than manual analysis usually produces

Humans then review these maps to define real bounded contexts and decide where to cut seams.
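As a rough illustration of what the dependency-mapping step involves, the sketch below scans fixed-format COBOL source for static `CALL 'PROGRAM'` statements and `COPY` members. It is a deliberately simple regex pass, not a parser, and `scan_program` and `build_graph` are hypothetical names. It assumes fixed-format source (indicator in column 7, code in columns 8-72) and cannot resolve dynamic calls made through a data name.

```python
import re
from typing import Dict, Set

# Static calls use a quoted literal; dynamic calls (CALL WS-NAME) are skipped.
CALL_RE = re.compile(r"\bCALL\s+['\"]([A-Z0-9#@$-]+)['\"]", re.IGNORECASE)
COPY_RE = re.compile(r"\bCOPY\s+([A-Z0-9#@$-]+)", re.IGNORECASE)


def code_area(line: str) -> str:
    """Return columns 8-72 of a fixed-format line, or '' for comment lines."""
    if len(line) > 6 and line[6] in "*/":
        return ""
    return line[7:72]


def scan_program(source: str) -> Dict[str, Set[str]]:
    """Collect statically called programs and copybooks from one program."""
    calls: Set[str] = set()
    copybooks: Set[str] = set()
    for line in source.splitlines():
        text = code_area(line)
        calls.update(name.upper() for name in CALL_RE.findall(text))
        copybooks.update(name.upper() for name in COPY_RE.findall(text))
    return {"calls": calls, "copybooks": copybooks}


def build_graph(programs: Dict[str, str]) -> Dict[str, Set[str]]:
    """Map each program name to the set of programs it calls statically."""
    return {name: scan_program(src)["calls"] for name, src in programs.items()}


SAMPLE = "\n".join([
    "       IDENTIFICATION DIVISION.",
    "       PROGRAM-ID. PAYCALC.",
    "       DATA DIVISION.",
    "       WORKING-STORAGE SECTION.",
    "           COPY CUSTREC.",
    "       PROCEDURE DIVISION.",
    "      * CALL 'OLDPROG' was retired in an earlier release",
    "           CALL 'TAXRATE' USING WS-AMOUNT.",
    "           CALL WS-DYNAMIC-PGM USING WS-AMOUNT.",
])

result = scan_program(SAMPLE)
```

The dynamic call on the last line of the sample is invisible to this scan. Gaps like that are one reason the generated maps need human review before anyone draws a boundary around them.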

2. Documentation and domain surfacing

Most mainframe shops suffer from "the code is the documentation." AI reverses this at a scale and speed no human team can match:

- Summarize what each job, program, or module does in plain language

- Extract and group business rules, validation logic, and calculation formulas

- Highlight high-risk sections: unusual conditionals, complex financial math, or brittle cross-module dependencies

Domain experts confirm, correct, or simplify these rules - turning opaque legacy behavior into shared organizational knowledge before it walks out the door with a retiring SME.

3. Translation scaffolding and refactoring support

AI produces strong, consistent first-pass translations at a scale no human team can replicate:

- Convert COBOL data structures into equivalent types in Java, .NET, or other target languages

- Produce baseline implementations of procedural logic, batch flows, and IO layers

- Apply consistent patterns simultaneously across hundreds of similar programs

The output should be treated as scaffolding. Human engineers refactor it into idiomatic, architecture-aligned code that is maintainable long-term.
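To show what data-structure conversion looks like at its simplest, the sketch below maps a handful of COBOL PICTURE strings to suggested Java types. The mapping rules (any implied decimal point becomes `BigDecimal`, up to 9 digits becomes `int`, up to 18 becomes `long`) are one plausible convention chosen for illustration, not a standard, and `map_picture` and `FieldType` are hypothetical names. Edited pictures such as `ZZ9.99` and usage clauses are deliberately out of scope.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldType:
    target: str   # suggested target type (an assumed convention)
    digits: int   # total digits, or character length for text fields
    scale: int    # digits after the implied decimal point
    signed: bool


def _expand(pic: str) -> str:
    """Expand repetition factors, e.g. '9(3)' -> '999'."""
    return re.sub(r"(.)\((\d+)\)", lambda m: m.group(1) * int(m.group(2)), pic)


def map_picture(pic: str) -> FieldType:
    """Suggest a target type for a simple alphanumeric or numeric PICTURE."""
    p = _expand(pic.upper().replace(" ", ""))
    if re.fullmatch(r"X+", p):
        return FieldType("String", len(p), 0, False)
    m = re.fullmatch(r"(S?)(9*)(?:V(9*))?", p)
    if not m or not (m.group(2) or m.group(3)):
        raise ValueError(f"unsupported PICTURE string: {pic}")
    signed = m.group(1) == "S"
    scale = len(m.group(3) or "")
    total = len(m.group(2)) + scale
    if scale > 0 or total > 18:
        target = "BigDecimal"   # never float or double for money
    elif total > 9:
        target = "long"
    else:
        target = "int"
    return FieldType(target, total, scale, signed)


amount_type = map_picture("S9(7)V99")
name_type = map_picture("X(30)")
```

Applying one rule set like this across hundreds of copybooks is where the consistency benefit comes from. Choosing the rule set, and deciding where a field deserves a richer domain type instead, remains an engineering decision.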

4. Test generation and regression harnesses

AI materially lowers the cost and time of building validation infrastructure:

- Generate unit and integration test cases from existing code paths and documentation

- Propose property-based tests derived from inferred business rules and invariants

- Build comparison harnesses that run old and new implementations side-by-side and surface discrepancies automatically

Humans curate and harden these tests, add critical domain scenarios, and make the final call on what needs to be fixed before cutover.
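A comparison harness can start very small. The sketch below reconciles keyed outputs from the legacy and modernized runs and reports every record that is missing on one side or differs by more than an agreed tolerance. The `reconcile` function, the `Mismatch` record, and the account keys are hypothetical; a tolerance of zero corresponds to requiring exact equality.

```python
from decimal import Decimal
from typing import Dict, List, NamedTuple, Optional


class Mismatch(NamedTuple):
    key: str
    legacy: Optional[Decimal]
    modern: Optional[Decimal]


def reconcile(legacy: Dict[str, Decimal],
              modern: Dict[str, Decimal],
              tolerance: Decimal = Decimal("0")) -> List[Mismatch]:
    """Return records that are missing on one side or differ beyond tolerance."""
    mismatches: List[Mismatch] = []
    for key in sorted(legacy.keys() | modern.keys()):
        old, new = legacy.get(key), modern.get(key)
        if old is None or new is None or abs(old - new) > tolerance:
            mismatches.append(Mismatch(key, old, new))
    return mismatches


legacy_run = {"ACCT-1": Decimal("10.00"),
              "ACCT-2": Decimal("5.01"),
              "ACCT-3": Decimal("7.00")}
modern_run = {"ACCT-1": Decimal("10.00"),
              "ACCT-2": Decimal("5.00")}

strict = reconcile(legacy_run, modern_run)
lenient = reconcile(legacy_run, modern_run, tolerance=Decimal("0.01"))
```

The `tolerance` argument is where the human decision about "equivalent" becomes executable: the strict run flags the one-cent difference on ACCT-2, the lenient run accepts it, and both flag the record that the new system dropped entirely.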

5. Ongoing assistance during and after migration

AI's value doesn't end at go-live:

- Code review copilot: flag likely bugs or non-idiomatic patterns in the new stack

- Ops support: help craft log queries, incident runbooks, and remediation playbooks

- Continuous modernization: assist in further refactoring, performance optimization, and feature evolution

The engineers and SREs who understand the system's real-world behavior remain in the driver's seat - AI keeps the velocity high throughout.

AI accelerates migration, but experts guide critical decisions.

The Right Mental Model

- Humans define why to modernize, what success looks like, and what risks are acceptable - strategy, architecture, domain interpretation, and organizational change.

- AI accelerates how you get there - mapping, documenting, translating, and testing at a scale and speed no human team can match.

The projects that fail treat AI as a push-button solution requiring no human governance. The projects that succeed use AI aggressively for what it does best, while keeping human judgment firmly in place for the decisions that determine whether the modernized system actually works in the real world.